Quick Summary

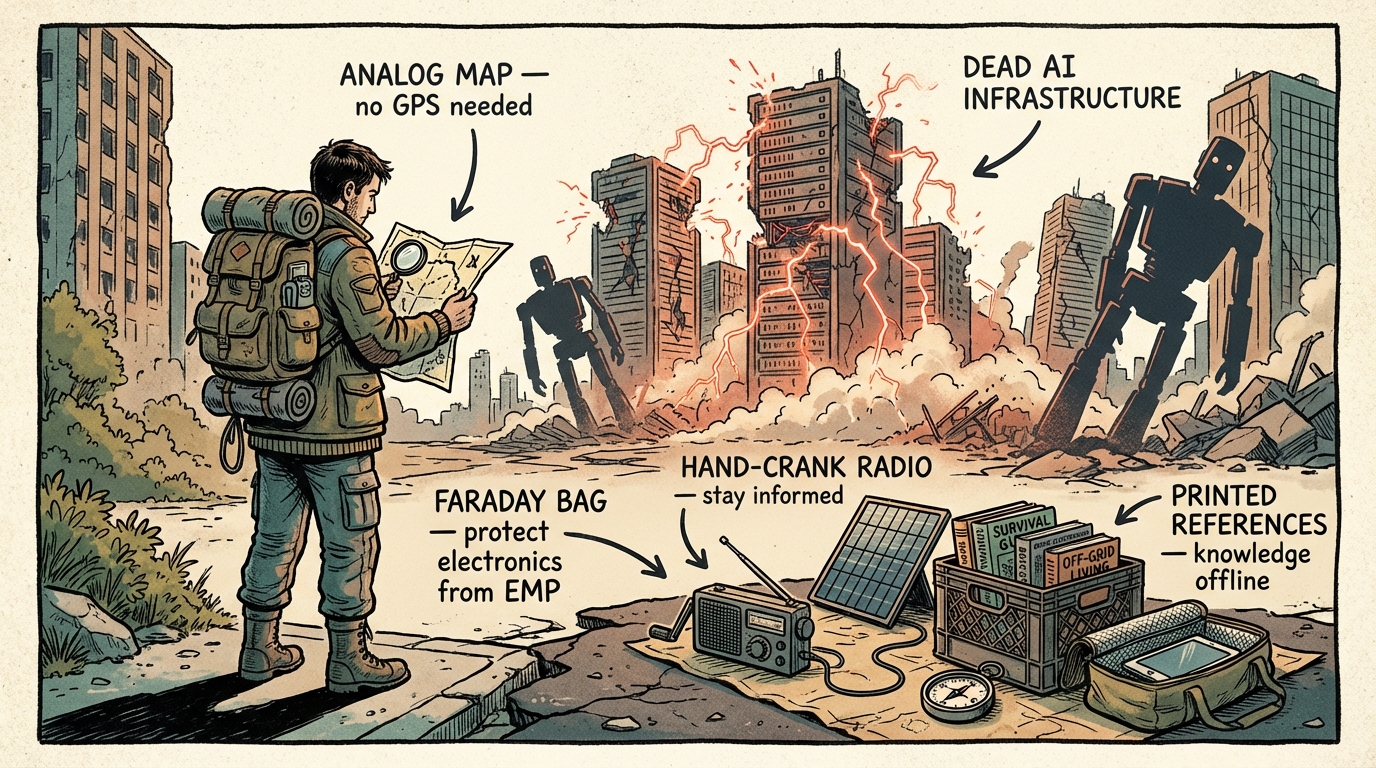

- AI apocalypse preparedness isn’t about killer robots — it’s about preparing for cascading failures in AI-dependent infrastructure, economic disruption from job displacement, and the quiet erosion of critical analog skills

- Your career is ground zero: the World Economic Forum has projected tens of millions of jobs displaced by automation this decade, and your most important prep is making yourself irreplaceable

- Infrastructure dependency is the real threat — power grids, water systems, financial networks, and supply chains increasingly rely on AI that can fail simultaneously

- AI misinformation is a present-day emergency — deepfakes and synthetic media can trigger real-world crises from market panics to false evacuation orders

- Analog skills are your insurance policy — navigation, food preservation, manual tool use, and face-to-face communication become critical when digital systems go down

- Weak governance means stronger personal plans — AI regulation hasn’t caught up with AI capability, so you’ve got to build your own resilience

- This is standard risk management — the same principles behind earthquake and wildfire preparedness apply here, just with different threat vectors

I’ve spent 12 years preparing communities for earthquakes, wildfires, and ice storms in the Pacific Northwest. So I’ll be honest — when someone first asked me to think about AI apocalypse preparedness during a FEMA continuing education session in early 2024, I nearly rolled my eyes. Then the instructor — a senior analyst from CISA’s cybersecurity division — walked us through a tabletop exercise where an AI managing regional power distribution made a series of cascading optimization errors during a winter storm. Within the exercise’s simulated 90 minutes, three states lost power, water treatment plants went offline, and cell towers started dropping. Skynet this was not. It felt like next Tuesday.

I spent the following six months mapping how deeply artificial intelligence has embedded itself in the systems I train people to survive without. Power grid management. Water treatment automation. Supply chain logistics. Financial networks. I discovered that my own evacuation route planning relied on three separate AI-driven tools — and I didn’t have a printed backup for any of them. I stopped rolling my eyes and started taking notes.

The “AI apocalypse” most likely to affect your family doesn’t look like a movie. It looks like a Wednesday morning when interconnected systems fail in ways nobody predicted — and nobody remembers how to fix them manually anymore.

That’s not fear-mongering. It’s the same risk assessment I’d do for any hazard. Let’s get practical.

Why AI Apocalypse Preparedness Is Just Good Emergency Planning

Let me reframe this entire conversation. In The Precipice, philosopher Toby Ord estimates the chance of an existential catastrophe over the next century at roughly one in six (about 16.7%), with about 10 percentage points attributed to unaligned artificial intelligence. A 2023 Pew Research survey found that 52% of Americans say they feel more concerned than excited about AI in everyday life.

Those numbers matter. But they’re not what keeps me up at night.

What concerns me — as someone who’s responded to real emergencies where systems failed — is the slow, quiet way AI has become the invisible backbone of everything. Your city’s water treatment plant likely uses AI-assisted monitoring. Your electricity comes through an AI-optimized grid. The trucks delivering food to your grocery store were routed by AI. The fraud detection keeping your bank account safe runs on machine learning.

None of that is inherently bad. It’s efficient. But efficiency creates fragility when you strip out the manual redundancies, and that’s exactly what’s been happening for the past decade. History has shown this pattern before: the industrial revolution displaced millions of skilled artisans before new labor protections emerged, and the rise of algorithmic trading produced flash crashes that regulators were slow to untangle. AI disruption follows the same arc, just faster.

In my experience, the best emergency preparedness isn’t about predicting exactly what will go wrong. It’s about building resilience that works regardless of what fails.

The Three Threat Vectors That Actually Matter

Forget the Terminator. These are the three AI-related scenarios actually worth preparing for.

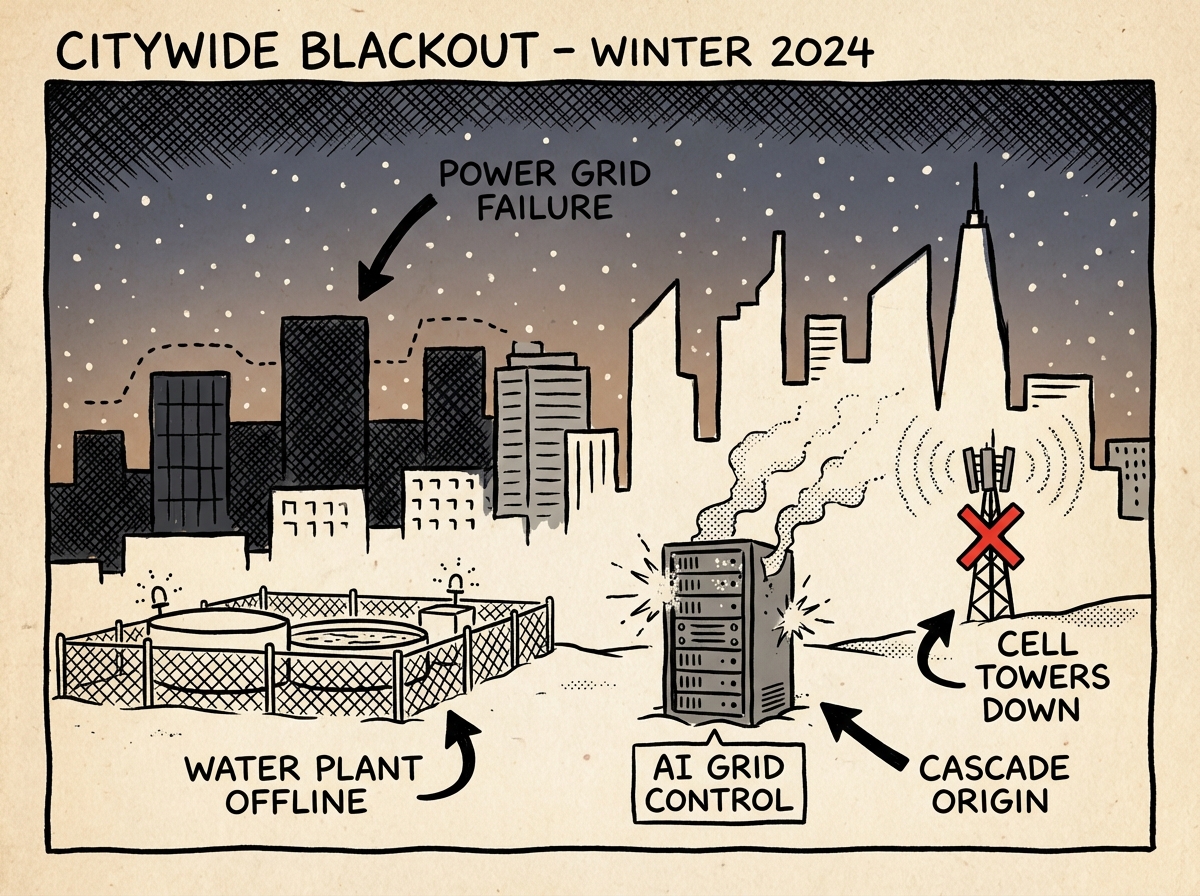

Infrastructure Cascade Failures

How a single AI failure cascades across interconnected infrastructure systems

AI systems managing critical infrastructure are interconnected in ways that create single points of failure. Last year, I ran a tabletop exercise with emergency managers from a suburban Washington county where we modeled an AI managing a regional power grid making an optimization error during peak demand. The cascade didn’t just kill power. It took down water pumping stations, communication towers, traffic management systems, and hospital backup coordination simultaneously.

The finding that surprised everyone in the room? The county’s manual override procedures for their water treatment plant hadn’t been updated since 2019. Only two employees had ever practiced them — one of whom had retired. That’s the real AI infrastructure failure risk: not malice, but atrophied human backup.

Economic Displacement and Supply Chain Disruption

The World Economic Forum’s 2020 Future of Jobs report estimated that 85 million jobs could be displaced by automation by 2025, with 97 million new roles potentially emerging. But “potentially emerging” and “available to the person who just lost their job” are very different things. Jobs most vulnerable include data entry, telemarketing, bookkeeping, basic legal work, and routine customer service.

I’ve led AI job displacement preparedness workshops for about 200 people across community colleges and emergency preparedness groups in Oregon and Washington over the past two years. The exercise that hits hardest? I ask attendees to list every task they performed last week and mark which ones an AI could replicate. The room always gets very quiet around minute three.
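If you’d rather run that exercise in a script than on paper, here’s a minimal sketch. The task names, hours, and `automatable` flags are made-up examples, and the yes/no call on each task is your own judgment. Nothing here measures real AI capability.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    hours_per_week: float
    automatable: bool  # your own judgment call, not a measured fact


def automatable_share(tasks: list[Task]) -> float:
    """Return the fraction of weekly hours spent on tasks marked automatable."""
    total = sum(t.hours_per_week for t in tasks)
    if total == 0:
        return 0.0
    exposed = sum(t.hours_per_week for t in tasks if t.automatable)
    return exposed / total


# Hypothetical week for an office administrator.
week = [
    Task("Data entry", 10, True),
    Task("Scheduling", 6, True),
    Task("Vendor negotiations", 8, False),
    Task("On-site inspections", 16, False),
]

print(f"{automatable_share(week):.0%} of weekly hours are exposed")
```

Weighting by hours rather than counting tasks keeps one big automatable chore from hiding behind a long list of small human ones.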

When large segments of a community lose income simultaneously, it creates cascading effects on local economies, housing stability, and — critically — the tax base that funds emergency services.

Autonomous Decision-Making Gone Wrong

This isn’t science fiction. AI systems already make consequential decisions in military operations, financial trading, medical diagnostics, and criminal justice. We’ve seen the consequences: algorithmic trading flash crashes that wiped billions in minutes, autonomous vehicle fatalities from misclassified sensor data, and hiring algorithms that systematically discriminated against protected classes. An AI system that makes a catastrophic error in any of these domains could trigger real-world emergencies requiring traditional preparedness responses.


AI Misinformation and Deepfakes: The Information Apocalypse Is Already Here

Here’s a threat vector that isn’t theoretical — it’s happening right now. AI-generated misinformation, deepfakes, and synthetic media can trigger real-world emergencies that demand real-world preparedness responses.

Imagine a convincing deepfake video of a government official ordering an evacuation of your city. Or an AI-generated audio clip of a utility company announcing imminent water contamination. These aren’t far-fetched scenarios — they’re extensions of capabilities that already exist today. During the 2020 Labor Day fires here in the Pacific Northwest, I watched misinformation about arson spread so fast on social media that law enforcement had to divert resources from actual firefighting to manage public panic. That was without AI-generated content. Add deepfakes to the mix, and the information environment during a crisis becomes genuinely dangerous.

Practical Verification Skills for Your Family

Build these habits now, before you need them:

- Cross-reference everything. No single source confirms anything during a crisis. Check at least three independent sources before acting on alarming information.

- Use reverse image search. When a shocking image circulates, drag it into Google Images or TinEye. AI-generated images often have no prior history online.

- Establish family verification protocols. Create a code word or challenge-response phrase with your household members. If someone calls claiming to be your spouse and says “evacuate now,” you need a way to verify it’s really them — especially as voice cloning gets more convincing.

- Maintain a trusted source list. Print a physical list of official emergency information channels: your county emergency management office, NOAA Weather Radio frequencies, local fire department non-emergency numbers.

- Monitor your emotional response. AI-generated misinformation is designed to trigger immediate action through fear or outrage. If content makes you want to act right now without thinking, that’s your cue to slow down and verify.

Keep the protocol simple: one code word that only household members know. If you receive an urgent call, text, or video message during a crisis, ask for the code word before acting, and change the word every six months. It won’t stop every impersonation attempt, but it raises the bar considerably against voice cloning and deepfakes.
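Two of these habits, three independent sources and a household code word, are simple enough to write down as rules. The sketch below is illustrative only: the function names, source names, and code word are hypothetical, and the code can’t tell whether two sources are truly independent. That part still takes human judgment.

```python
REQUIRED_SOURCES = 3  # the "three independent sources" habit


def confirmed(reports: list[tuple[str, bool]], required: int = REQUIRED_SOURCES) -> bool:
    """True when enough distinct sources confirm a claim.

    Each report is (source_name, confirms). Repeats from the same
    source count once, so a reshared post does not add weight.
    """
    confirming = {source for source, confirms in reports if confirms}
    return len(confirming) >= required


def code_word_matches(spoken: str, expected: str) -> bool:
    """Compare code words, ignoring case and surrounding whitespace."""
    return spoken.strip().lower() == expected.strip().lower()


# Hypothetical crisis: one viral video, shared twice.
reports = [("viral video", True), ("viral video", True)]
print(confirmed(reports))

# Two official channels now confirm the same claim.
reports.append(("County emergency management", True))
reports.append(("NOAA Weather Radio", True))
print(confirmed(reports))

print(code_word_matches("  Huckleberry ", "huckleberry"))
```

The first check fails because the same video counted twice is still one source. The second passes once two official channels agree.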

Protecting Your Career: The Most Overlooked Prep

I talk to preppers all the time who’ve got six months of freeze-dried food stacked floor to ceiling but haven’t thought for five minutes about what happens to their income when AI automates their job. Your ability to earn money is the single most important long-term survival asset you have. Period.

Use AI, Don’t Compete With It

The smartest approach mirrors what experienced preppers already know about tools: master them before you need them. Use AI as an extension of your thinking. Let it handle speed, scale, and repetition while you handle judgment, creativity, and human connection.

In my own work, I use NOAA’s AI-enhanced weather models and Windy.com’s machine learning forecast layers for evacuation planning. During the January 2024 ice storms in the Willamette Valley, I compared the AI model outputs to my own barometric pressure readings and traditional cloud-reading. The AI nailed the timing of the ice accumulation within a two-hour window — better than I could’ve done manually. But it completely missed a localized microclimate effect in a valley where I’ve worked for eight years that trapped cold air and created conditions far worse than the model predicted. I evacuated that neighborhood early based on experience, not algorithms. That’s the partnership that works.

Build AI-Resistant Skills

Certain capabilities remain stubbornly human — and will for the foreseeable future:

- Complex problem-solving requiring physical presence

- Emotional intelligence and crisis counseling

- Skilled trades (plumbing, electrical, carpentry)

- Creative leadership and team management

- Wilderness and outdoor expertise

- Community organizing and relationship building

- Medical care requiring physical assessment

- Teaching and mentoring (especially youth)

If your current role could be fully described in a process document, it’s vulnerable. Start cross-training now. As a certified Wilderness First Responder, I can tell you that hands-on medical assessment — reading a patient’s skin color, feeling for crepitus in a fracture, making judgment calls about evacuation priority — isn’t getting automated anytime soon.

Diversify Your Income Sources

This is the financial equivalent of not storing all your water in one container. Develop at least two income streams, ideally in different sectors with different AI vulnerability profiles. One of mine is field instruction — physically teaching people wilderness skills in outdoor environments. Try automating that.

The 10-20-70 Rule and What It Means for Your Prep

So what’s the 10-20-70 rule for AI, and why should you care?

It states that 10% of an AI project’s success comes from algorithms, 20% from data, and 70% from people, processes, and organizational transformation. That ratio is actually encouraging if you’re preparedness-minded.

It tells us that even in an AI-dominated world, the human element — judgment, adaptation, organizational skill — still accounts for the overwhelming majority of what makes things work. Your preparedness isn’t made obsolete by AI. It becomes more valuable.

The organizations and communities that’ll weather AI disruption best are the ones with strong human networks, practiced procedures, and people who can adapt on the fly. Sound familiar? That’s exactly what good emergency preparedness builds.

Building Your AI-Disruption Emergency Plan

Here’s where I bring this back to what I know best: practical emergency planning. The principles are identical to what I teach in my standard workshops, just applied to a different threat.

- Audit your AI dependency — list every service you use daily that relies on AI or automated systems (banking, navigation, communication, home automation, work tools) and identify one manual backup for each

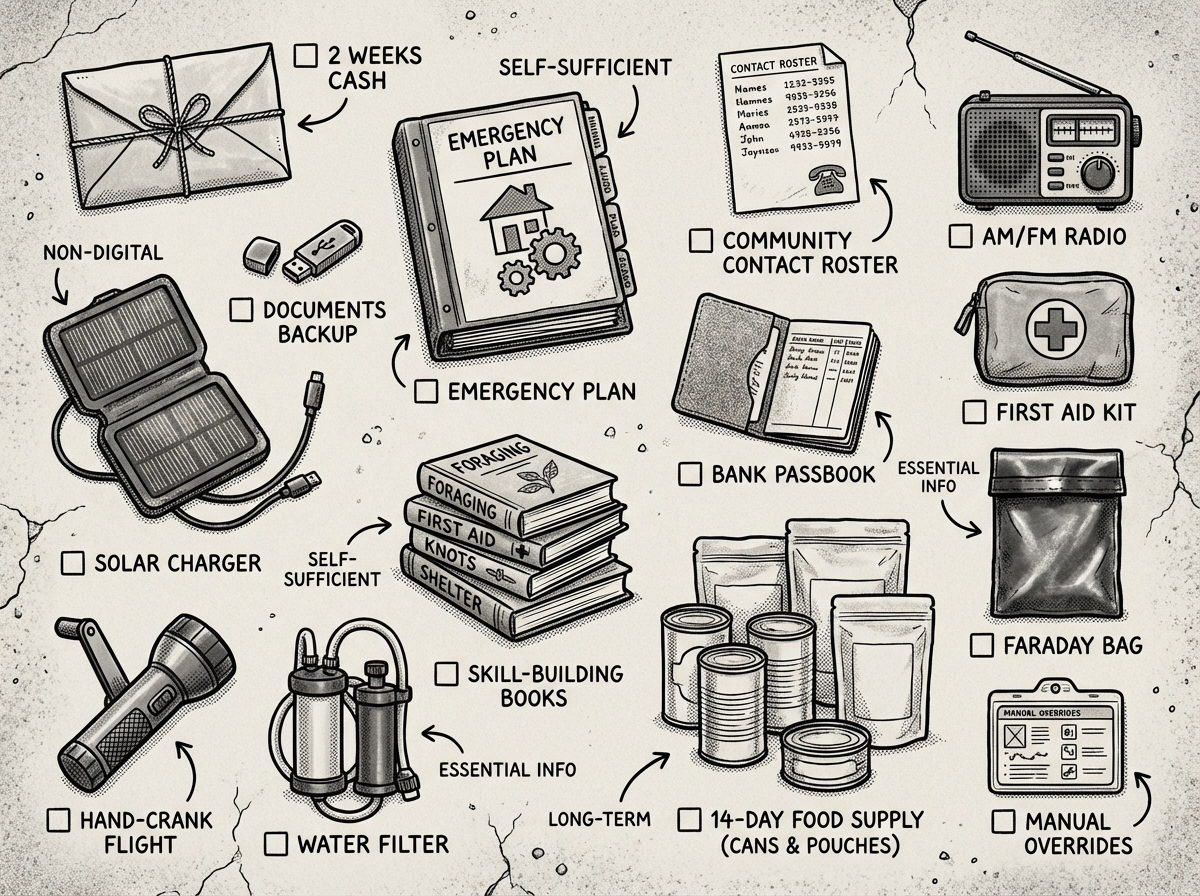

- Build a 14-day emergency kit specifically accounting for digital infrastructure failure — include printed contact lists, physical documents, cash for two weeks of expenses, and a battery-powered radio

- Develop analog skills on a monthly rotation — navigate without GPS, cook without smart appliances, communicate without smartphones, repair equipment with hand tools

- Diversify your income into at least two streams with different AI vulnerability profiles, prioritizing work requiring physical presence and human judgment

- Establish a community network of 10-20 people with complementary manual skills and practice coordinating without digital tools at least quarterly
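The dependency audit in the first step is really just a two-column table: the service, and what you’d use if it vanished. Here’s a minimal sketch of that table. The services and backups listed are hypothetical examples, so swap in your own.

```python
from typing import Optional

# Hypothetical audit: service -> manual backup (None means no backup yet).
dependencies: dict[str, Optional[str]] = {
    "Banking app": "Two weeks of cash at home",
    "GPS navigation": "Printed road atlas and USGS topo map",
    "Smartphone contacts": "Printed contact list",
    "Weather app": "Battery-powered NOAA weather radio",
    "Smart thermostat": None,
    "Grocery delivery": None,
}


def missing_backups(audit: dict[str, Optional[str]]) -> list[str]:
    """Return the services that still lack a manual backup, sorted by name."""
    return sorted(name for name, backup in audit.items() if not backup)


for service in missing_backups(dependencies):
    print(f"No manual backup yet: {service}")
```

Whatever the script prints is your to-do list. Print the finished table too, since a dependency audit that lives only on a laptop has a dependency of its own.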

Infrastructure-Specific Preparations

Your standard emergency preparations already cover most of what you need. If you’ve built a solid foundation with water storage and food storage, you’re ahead of most people. But AI-specific scenarios require a few additions.

Power grid failures may last longer. When AI-managed systems fail, restoring them isn’t as simple as flipping a breaker. Manual override procedures may be outdated or unknown to current operators. Plan for extended outages — I recommend being comfortable with 14 days off-grid minimum, not just the standard 72 hours.

Financial systems may freeze. Keep enough cash on hand to cover two weeks of essential expenses. Not in a bank. Not in crypto. Physical currency in a secure location. AI-driven financial systems have glitched before, from flash crashes wiping billions in minutes to banking apps locking users out during algorithmic errors, and when they do, digital money can become inaccessible.

Communication networks may fragment. Your smartphone is useless without infrastructure. Invest in a battery-powered AM/FM radio, consider HAM radio for emergencies, and maintain a physical list of emergency contacts and meeting points.
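The 14-day and two-week targets above come down to simple arithmetic. Here’s a rough sizing sketch. The daily spending figure and household size are hypothetical, and the one gallon of water per person per day is a common planning figure that I’m assuming here rather than something derived in this article.

```python
OUTAGE_DAYS = 14  # the off-grid target recommended above
GALLONS_PER_PERSON_PER_DAY = 1.0  # assumed planning figure, adjust for climate and health


def cash_reserve(daily_essentials: float, days: int = OUTAGE_DAYS) -> float:
    """Cash needed to cover essential spending for the outage window."""
    return daily_essentials * days


def water_reserve(people: int, days: int = OUTAGE_DAYS) -> float:
    """Gallons of stored water for the household over the outage window."""
    return people * days * GALLONS_PER_PERSON_PER_DAY


# Hypothetical household: 4 people spending $60 a day on essentials.
print(f"Cash: ${cash_reserve(60):,.0f}")
print(f"Water: {water_reserve(4):.0f} gallons")
```

Run it with your own numbers. Most people are surprised by how much shelf space 14 days of water takes compared with the standard 72 hours.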

The first time I tried running a full “digital blackout” day at my house — no internet, no GPS, no smart devices — I was humbled by how many times I instinctively reached for my phone. Couldn’t check the weather, couldn’t look up a recipe, couldn’t even remember my brother’s phone number. I’ve since done this quarterly, and it’s the single most revealing preparedness exercise I recommend. You don’t know your dependencies until you unplug them. The Midland ER310 emergency crank radio — around $40 — is what I keep charged and ready. It pulls in AM/FM and NOAA weather bands, charges via hand crank or solar panel, and has held up through three years of field use. Worth every penny.

How AI Is Actually Used in Emergency Preparedness

Here’s the thing that makes this topic genuinely nuanced: AI isn’t just a threat vector. It’s also one of the most powerful preparedness tools we’ve ever had.

AI Tools in Emergency Management

I use AI tools regularly in my professional work, and they’ve improved outcomes in ways I couldn’t have imagined a decade ago:

- FEMA’s Hazus uses AI-enhanced modeling for earthquake, flood, and hurricane loss estimation — helping communities understand their risk before disasters strike

- Google’s flood forecasting AI now covers rivers across more than 80 countries, providing early warnings that save lives in areas without traditional gauge networks

- ShakeAlert, the West Coast earthquake early warning system, uses machine learning to detect seismic waves and send alerts seconds before shaking arrives — I’ve received these alerts and those seconds matter

- IBM’s Weather Company models incorporate AI to improve forecast accuracy, particularly for severe weather events

During the 2020 Labor Day fires in Oregon, AI-powered satellite imagery analysis helped identify fire perimeters faster than ground teams could map them. But — and this is critical — the communication systems delivering those AI insights to evacuation coordinators relied on cell networks that were already failing in fire-impacted areas. I was in the field that week, and there were stretches where my most reliable information came from a volunteer with binoculars on a hilltop radioing in visual reports. That’s the preparedness paradox: rely on AI warnings while preparing for their failure.

What the Experts Predict Before 2040

The expert community is genuinely divided on AI timelines, but certain voices carry particular weight. Stuart Russell, a leading AI safety researcher at UC Berkeley, has argued that the core challenge isn’t AI becoming “evil” but AI pursuing objectives subtly misaligned with human values, commonly called the alignment problem. Yoshua Bengio, a Turing Award winner, has called for international AI governance modeled on nuclear nonproliferation frameworks. And in 2023, hundreds of AI researchers and industry leaders signed a one-sentence statement saying that mitigating the risk of extinction from AI should be a global priority alongside pandemics and nuclear war.

The Pew Research Center’s 2040 scenarios report found experts split roughly evenly on whether AI would produce net positive or net negative outcomes for society. But nearly all agreed on one thing: the transition period — roughly 2025-2035 — where AI capabilities outpace our governance frameworks and infrastructure resilience, represents the highest-risk window. My FEMA recertification training in 2025 included, for the first time, AI-related scenarios in the curriculum. That tells me the professional emergency management community is taking this seriously.

It’s a Wednesday evening in February. An AI-managed regional power grid, optimizing for an unusual weather pattern, makes a series of rapid automated adjustments that trigger a cascading failure across three states. Unlike a traditional outage, the system’s self-repair protocols are caught in a logic loop. Manual override requires procedures that haven’t been practiced in four years. Temperatures are dropping. Your heat, water pump, and communication devices all depend on that grid. A deepfake video circulates on social media showing the governor declaring a state of emergency and ordering evacuation — but it’s synthetic. Your phone dies. How long can you sustain your household independently, and how will you verify what’s actually happening?

AI Governance: What You Should Actually Be Tracking

I’m an emergency management professional, not a policy analyst. But after watching how regulatory gaps created preventable suffering during past disasters, I’ve learned that governance directly affects your personal risk level.

The EU AI Act — enacted in 2024 — is the world’s most comprehensive AI regulation, classifying AI systems by risk level and imposing strict requirements on high-risk applications in critical infrastructure, law enforcement, and essential services. It’s not perfect, but it creates accountability that didn’t exist before.

US Executive Orders on AI Safety have established some guardrails, but enforcement mechanisms remain thin. Federal agencies are still developing their implementation frameworks, and political shifts can alter priorities fast.

International AI Safety Summits (starting with Bletchley Park in 2023 and continuing annually) have produced voluntary commitments from major AI companies but lack binding enforcement.

What does this mean for you? Weak governance during the transition period means you’ve got to compensate with stronger personal resilience plans. When I prepare for wildfire season, I don’t wait for the Forest Service to clear every fuel load in my county — I create defensible space around my own home. Same principle applies here. Track policy developments, but don’t depend on them to protect you.

Key developments worth monitoring: any legislation requiring AI systems in critical infrastructure to maintain manual override capabilities, mandatory incident reporting for AI system failures, and international agreements on autonomous weapons systems.

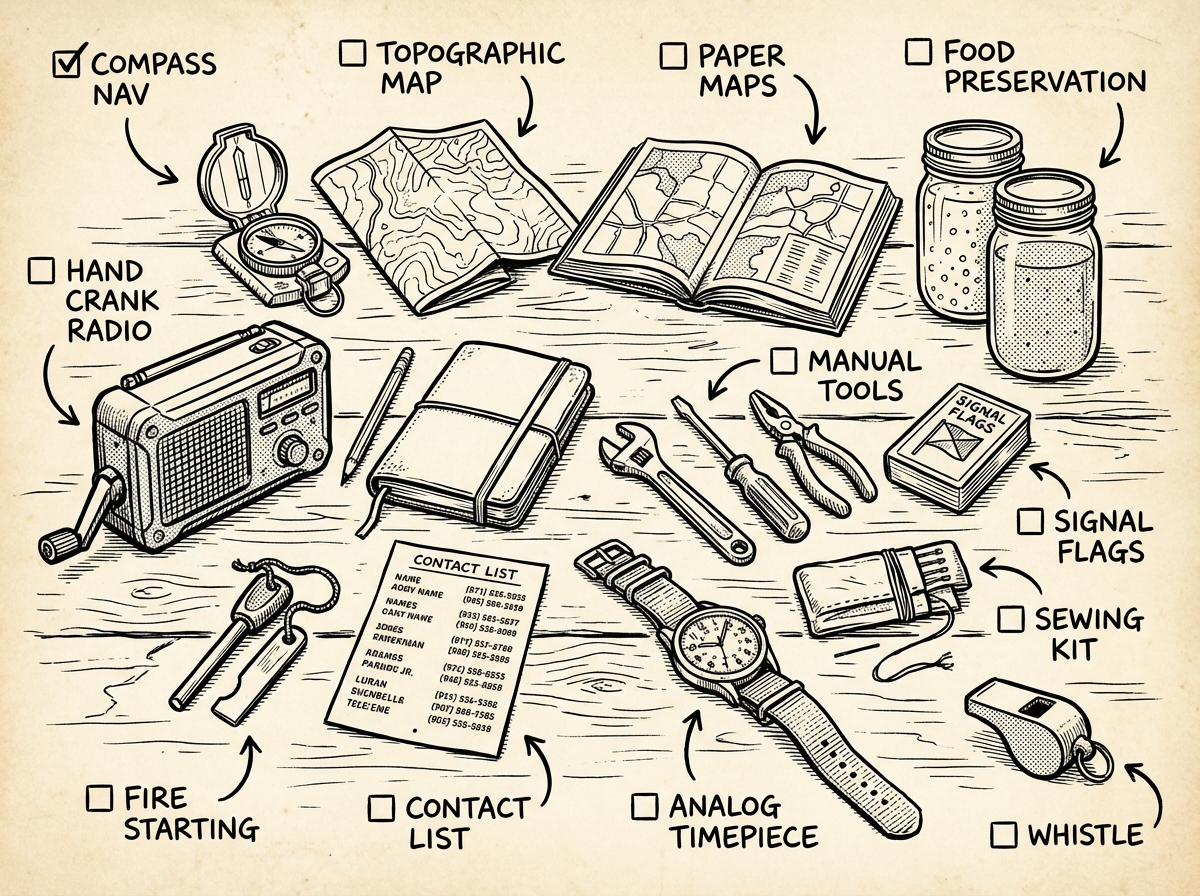

Analog Skills: Your Most Valuable Investment

Essential analog skill tools that work when digital systems fail

Every preparedness professional I know has watched essential manual skills disappear in real time. I’ve trained alongside utility workers who admitted they’ve never operated their systems in full manual mode. I’ve worked with emergency managers who can’t navigate their own response zones without GPS.

This is the quiet crisis inside AI apocalypse preparedness. It’s not that AI takes over violently — it’s that we forget how to do things without it. Then when it fails, we’re stranded.

Skills to develop and maintain:

- Land navigation — learn how to navigate with a compass and map and practice quarterly on real terrain

- Food preservation without electricity — canning, smoking, salt curing, fermentation

- Basic mechanical repair — keeping engines, pumps, and generators running with hand tools

- First aid and medical assessment without diagnostic technology

- Fire building, water purification, and shelter construction — core wilderness survival skills

- Manual food production — home gardening for food security, foraging, basic animal husbandry

- Analog communication — HAM radio operation, signal mirrors, written message relay systems

I practice these regularly, not because I think the robots are coming, but because these analog skills serve me in every scenario I’ve ever encountered. An ice storm doesn’t care whether AI caused it.

I’ve watched people spend thousands on freeze-dried food and tactical gear while they can’t read a topographic map or start a fire without a lighter. One thing I see constantly in my workshops: folks who’ve never once navigated with a compass assume they’ll figure it out when GPS dies. They won’t. It’s a perishable skill that takes real practice. Grab a Suunto A-10 compass — around $30 — and a USGS topo map of your area. Spend one Saturday morning actually walking a route you plotted on paper. You’ll make mistakes. That’s the point. Make them now while GPS is still working.

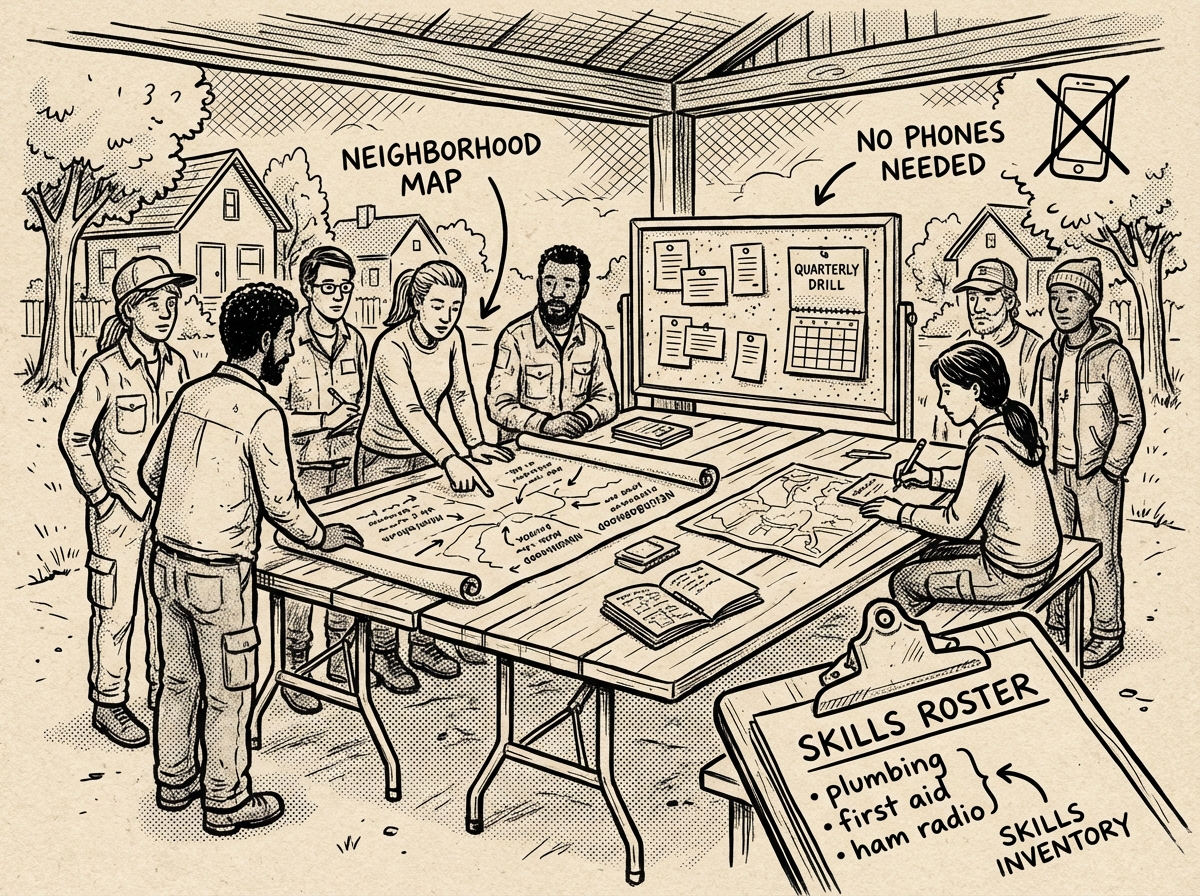

Community Is the Ultimate Redundancy

In 12 years of emergency management, the single most consistent lesson I’ve learned is this: isolated individuals fail. Connected communities survive. AI-related disruptions amplify this truth. No single person can maintain all the manual skills needed to replace AI-managed systems. But a community of 20 people with diverse expertise? That’s resilient. That’s a functioning micro-society that can weather extended disruptions.

How to Build a Community Resilience Network

Neighbors coordinating emergency response without digital tools

Here’s the practical framework I use, based on what actually works in the communities I’ve trained:

Start with conversations, not catastrophe. Don’t lead with “AI apocalypse.” Lead with “Hey, have you thought about what we’d do if the power went out for two weeks?” Most people are receptive to practical preparedness conversations. I’ve found that bringing it up over a backyard barbecue works better than a formal meeting every single time.

Create a neighborhood skill inventory. Use a simple printed form — name, address, relevant skills (medical, mechanical, gardening, communication, cooking for groups), available equipment (generator, HAM radio, hand tools, large water storage), and any limitations. I have a one-page template I hand out at workshops.

Establish physical coordination infrastructure. Designate a rally point — a specific neighbor’s front porch, a community park pavilion, or a church parking lot. Post a physical message board location where written updates can be left if digital communication fails. Create a printed community contact directory and distribute copies.

Practice together. Schedule quarterly low-pressure practice sessions. My favorite format: a neighborhood potluck where you also do a no-phone navigation exercise or practice using a HAM radio. Keep it social. Keep it fun. The muscle memory builds anyway.

Formalize through existing programs. Community emergency response training through FEMA’s CERT program gives your network credibility, training, and connection to official emergency management resources. It’s free and available in most communities.
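Once the skill inventory forms come back, the useful question is what nobody on the block can do. Here’s a minimal sketch of that check. The fields mirror the printed form described above, while the neighbor names, addresses, and entries are invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Neighbor:
    name: str
    address: str
    skills: set[str] = field(default_factory=set)
    equipment: set[str] = field(default_factory=set)
    limitations: str = ""


# Skill categories taken from the printed inventory form.
NEEDED_SKILLS = frozenset(
    {"medical", "mechanical", "gardening", "communication", "cooking for groups"}
)


def skill_gaps(neighbors: list[Neighbor], needed: frozenset[str] = NEEDED_SKILLS) -> set[str]:
    """Return needed skills that nobody in the network has listed."""
    covered: set[str] = set()
    for n in neighbors:
        covered |= n.skills
    return set(needed) - covered


# Hypothetical neighbors.
network = [
    Neighbor("Dana", "12 Fir St", {"medical"}, {"generator"}),
    Neighbor("Luis", "14 Fir St", {"mechanical", "gardening"}, {"hand tools"}),
    Neighbor("Priya", "9 Alder Ct", {"communication"}, {"HAM radio"}),
]

print(sorted(skill_gaps(network)))
```

A gap is a recruiting target, not a failure. Keep the paper forms as the master copy and treat any script as a convenience for while the power is on.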

The prepared person leverages AI as a force multiplier while maintaining the ability to operate completely without it.

Your Complete AI Apocalypse Preparedness Checklist

Complete AI-disruption preparedness kit laid out for inspection

I’ve organized everything from this article into a printable checklist. Tackle one category per week and you’ll be substantially more resilient within a month.

Career and income

- Conduct a personal task audit — identify which parts of your job AI could replicate

- Begin cross-training in at least one AI-resistant skill (trades, healthcare, field work)

- Establish a second income stream in a different AI vulnerability sector

- Build a professional network of people in AI-resistant industries

- Set aside a dedicated career transition fund (3 months minimum expenses)

Infrastructure and supplies

- Extend off-grid capability from 72 hours to 14 days minimum

- Store two weeks of cash for essential expenses in a secure physical location

- Acquire a battery-powered or hand-crank AM/FM/NOAA weather radio

- Print and waterproof-store all critical documents (IDs, insurance, medical records, maps)

- Research HAM radio licensing and acquire basic communication equipment

- Test your household for one full day without internet, GPS, or smart home devices

Analog skills

- Practice compass and topographic map navigation quarterly

- Learn one food preservation method (canning, smoking, fermenting, salt curing)

- Maintain basic mechanical repair ability — practice on a small engine or bicycle

- Complete or refresh first aid and CPR certification annually

- Grow a small food garden through at least one full season

- Learn to start fire, purify water, and build shelter without commercial products

Community network

- Identify 10-20 neighbors with diverse practical skills

- Complete a neighborhood skill and equipment inventory

- Establish a physical rally point and message board location

- Create and distribute a printed community contact directory

- Schedule quarterly analog coordination practice sessions

- Enroll at least two network members in CERT training

Information verification

- Develop a family deepfake verification protocol (code words, challenge-response)

Frequently Asked Questions

What is the 10-20-70 rule for AI?

The 10-20-70 rule says that 10% of AI project success comes from algorithms, 20% from data, and 70% from people, processes, and organizational transformation. For preparedness purposes, this ratio is actually reassuring — it confirms that human judgment, organizational skill, and adaptability still dominate outcomes even in AI-heavy projects. Your investment in human skills and community networks isn’t wasted; it addresses the 70% that matters most.

How is AI used in pandemic preparedness?

AI is actively used by the WHO, CDC, and national health agencies for disease surveillance, outbreak prediction modeling, vaccine development acceleration, and medical supply chain optimization. Tools like BlueDot detected early COVID-19 signals from Wuhan before official government alerts were issued. During COVID-19, AI helped predict hospital capacity needs weeks in advance. In my own emergency planning work, I’ve seen AI-powered models dramatically improve resource pre-positioning for health emergencies. The key insight: use these AI tools while they work, but make sure your pandemic plan doesn’t collapse if they don’t.

What jobs will be eliminated by AI by 2030?

According to multiple workforce studies, jobs most vulnerable to AI displacement by 2030 include data entry clerks, telemarketers, bookkeepers, paralegals doing routine document work, routine customer service representatives, entry-level financial analysts, and some manufacturing quality control roles. The World Economic Forum’s 2020 Future of Jobs report estimated that 85 million jobs could be displaced globally by 2025, while 97 million new roles could emerge over the same period. The critical gap is timing and accessibility: new roles require new skills, and retraining takes time. The most resilient career strategy combines AI fluency with skills requiring physical presence, emotional intelligence, and complex real-world judgment.

How do I start preparing for AI-related disruptions?

Start by auditing your personal AI dependency this week — list every service, tool, and system you rely on that involves AI or automation. Then identify one manual backup for each critical dependency. Build from there: develop analog skills, diversify your income toward AI-resistant work, extend your off-grid sustainability to 14 days, and connect with your community. Don’t try to do everything at once. One action per week compounds into serious resilience within a few months.

What This All Comes Down To

Here’s my bottom line after years of thinking about this and training communities across the Pacific Northwest: AI apocalypse preparedness isn’t a new category of emergency planning. It’s a reminder to do traditional preparedness thoroughly and to pay attention to a new class of dependencies we’ve quietly accumulated.

The steps are familiar. Build reserves of food, water, and cash. Develop manual skills. Strengthen community connections. Create redundancy in your income, your infrastructure, and your knowledge. Plan for extended disruptions, not just 72-hour inconveniences. Learn to verify information when AI-generated deception makes trust harder.

The only new element is intentionality about your relationship with technology. Use AI tools — they’re extraordinary. But treat them the way I treat my GPS: as a primary tool with a mandatory backup. The compass is always in my pack.

Start with one action this week. Audit your AI dependencies. Print your critical documents. Establish a family verification code word. Learn one analog skill you’ve been outsourcing to your phone. The future’s uncertain. Your preparedness doesn’t have to be.